We tend to see the web as a simple thing. A place to get some information, send an email, or publish a detailed report of our day on social media to get some virtual likes. When we want to do a little bit more, we usually turn to desktop software or mobile apps.

Modern web browsers, however, have evolved from the simple pieces of software they were when the web was born to advanced programs crammed with useful APIs. In this article I will explain five things that are possible in (some) modern browsers and illustrate each API with some code examples and a little demonstration.

It’s worth knowing that some of these APIs don’t work in all modern browsers. They should never be the core of your website or web app, only a nice addition. Look at them as the cherry on top of an already delicious pie.
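Treating an API as a nice addition usually comes down to feature detection: check that the API exists before touching it, and quietly skip the extra when it doesn’t. Here is a minimal sketch of that idea. Note that detectFeatures is a hypothetical helper name of my own, not something the browser provides, and it takes the global object as a parameter so it can also be tried outside a browser.

```javascript
// Hypothetical helper: reports which of the APIs covered in this article
// appear to be available on the given global object (usually `window`).
function detectFeatures(win) {
  const nav = win.navigator || {};
  return {
    speechSynthesis: 'speechSynthesis' in win,
    speechRecognition:
      'SpeechRecognition' in win || 'webkitSpeechRecognition' in win,
    mediaRecorder: 'MediaRecorder' in win && Boolean(nav.mediaDevices),
    notifications: 'Notification' in win,
    geolocation: 'geolocation' in nav,
  };
}

// In a browser you would pass the real global object:
// const features = detectFeatures(window);
// if (features.speechSynthesis) { /* offer a "read aloud" button */ }
```

Each flag only tells you the API is present, not that the user will grant permission, so the examples further down still need their own error handling.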

Sit back, relax and learn why the web is so amazing!

Web Speech API: Speech Synthesis

We’re diving right in with one of the more fun APIs to play around with. Did you know a browser could talk? That’s right, apart from displaying text, it can also convert that text to spoken words.

This API has some handy use cases, like providing a simple screen reader. Granted, most visually impaired people have a more capable screen reader installed on their device. This doesn’t mean that they have access to their own devices all the time, and providing a simple screen reader in the browser shows you care about them.

You can of course also use this for some fun marketing purposes, or to have an interactive assistant right there on your website.

Don’t overdo it, though, and always ask the user if they would like some spoken words, or prefer to read text themselves.

Before we look at how to use the Web Speech API, let’s see where we can actually use it. Currently the API is supported in all major browsers: Chrome, Firefox, Edge and Safari.

Ok, it’s time to start writing some code. The following few lines of code are all that’s required to make your browser talk:

const synth = window.speechSynthesis;

const utterance = new SpeechSynthesisUtterance('Hey look, I can talk');

synth.speak(utterance);

We start by locating our speech synthesiser, which lives on the global window object. Let’s put it in a synth variable for easier use later on.

The second part of the code will create a new SpeechSynthesisUtterance instance. This object will contain the text we want the browser to say, and optionally, some characteristics on how to pronounce this text.

Once we know where to find the speech synthesiser and have created our speech synthesis utterance, all we need to do is pass the utterance to the synthesiser. That wasn’t so difficult, was it?

I mentioned before that the utterance instance can also contain some characteristics on how to talk. Let’s look a bit deeper into this.

Each browser has a set of default voices we can choose from. Let’s see how you can choose another voice.

const utterance = new SpeechSynthesisUtterance('Hey look, I can talk with a female voice!');

const voices = synth.getVoices();

const voice = voices.find(voice => voice.name === 'Fiona');

utterance.voice = voice;

You can find a bunch of different male and female voices, each with its own accent. From the list of available voices, I chose Fiona’s. Once you know which voice you like, it’s as simple as setting that voice as the voice property of your utterance.
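One thing to keep in mind: the list of voices depends on the operating system and the browser, so a voice called Fiona may simply not exist on your user’s device. In some browsers getVoices() also returns an empty list until the voiceschanged event has fired. The sketch below is one way to cope with both. pickVoice is a hypothetical helper of my own, and the fallback order (name first, then language) is just an assumption about what you might want.

```javascript
// Hypothetical helper: prefer a voice by name, then any voice whose language
// starts with the given prefix; null means "keep the browser default".
function pickVoice(voices, preferredName, langPrefix) {
  return (
    voices.find(voice => voice.name === preferredName) ||
    voices.find(voice => voice.lang.startsWith(langPrefix)) ||
    null
  );
}

// Browser-only wiring, skipped when there is no window (for example in Node).
if (typeof window !== 'undefined' && 'speechSynthesis' in window) {
  const synth = window.speechSynthesis;
  const speak = () => {
    const utterance = new SpeechSynthesisUtterance('Hey look, I can talk!');
    const voice = pickVoice(synth.getVoices(), 'Fiona', 'en');
    if (voice) utterance.voice = voice;
    synth.speak(utterance);
  };
  // Some browsers load voices asynchronously and fire `voiceschanged` later.
  if (synth.getVoices().length > 0) speak();
  else synth.addEventListener('voiceschanged', speak, { once: true });
}
```

Some browsers may also refuse to speak until the user has interacted with the page, so in a real page you would probably call speak from a click handler.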

It’s also possible to change the voice a bit. The utterance has pitch and rate properties for you to customise; rate controls the speaking speed.

const utterance = new SpeechSynthesisUtterance('I talk slow, with a higher voice.');

utterance.pitch = 2;

utterance.rate = .7;
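Both properties have a limited range: according to the Web Speech API specification, pitch goes from 0 to 2 and rate from 0.1 to 10, with 1 as the default for both. If these values come from user input, such as a slider, it may be worth clamping them first. A small sketch, where normaliseSpeechOptions is a hypothetical helper name:

```javascript
const clamp = (value, min, max) => Math.min(max, Math.max(min, value));

// Hypothetical helper: keeps pitch within 0..2 and rate within 0.1..10,
// falling back to the default of 1 when a value is left out.
function normaliseSpeechOptions({ pitch = 1, rate = 1 } = {}) {
  return {
    pitch: clamp(pitch, 0, 2),
    rate: clamp(rate, 0.1, 10),
  };
}

// Usage in the browser:
// Object.assign(utterance, normaliseSpeechOptions({ pitch: 2, rate: 0.7 }));
```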

Now that we’ve seen how to choose a voice and customise it, let’s throw it all together:

const synth = window.speechSynthesis;

const utterance = new SpeechSynthesisUtterance('Wow, this speech synthesis API is really cool!');

const voices = synth.getVoices();

const voice = voices.find(voice => voice.name === 'Fiona');

utterance.voice = voice;

utterance.pitch = 2;

utterance.rate = .7;

synth.speak(utterance);

See the Pen Speech Synthesis example by Sam (@Sambego) on CodePen.

Web Speech API: Speech Recognition

We’ve seen that modern browsers are capable of talking, but did you know they are also quite capable of listening?

You might expect something as futuristic as speech recognition to be extremely difficult, but browsers have made it really simple. Using the SpeechRecognition interface of the Web Speech API it only takes a few lines of code.

You can use this API for a lot of cases, for example to create a simple personal assistant like Siri or Google Assistant. This assistant could help users with a more personal experience than a help section or some FAQs.

There is a caveat when using this API: it only works when you have an active internet connection. The reason for this is that the API doesn’t do its magic inside the browser. It will send a recording coming from your microphone to a server where it will analyse the sound and try to recognise the words being said. Once that server recognises some words or sentences, it will respond with a string of text, together with a percentage of how certain it is that it recognised all words correctly.

Unfortunately, this is currently only possible in Google Chrome, but Firefox is working on its implementation.

Now that you’re aware of its limitations, let’s see how we can recognise some spoken words.

const SpeechRecognition = window.SpeechRecognition || window.webkitSpeechRecognition;

const recognition = new SpeechRecognition();

recognition.lang = 'en-US';

recognition.continuous = false;

recognition.interimResults = false;

recognition.onresult = event => console.log(event.results[0][0].transcript);

recognition.onerror = error => console.error(error);

recognition.start();

The speech recognition interfaces are currently prefixed in Chrome, so we fall back to webkitSpeechRecognition when SpeechRecognition is not available. Either way, we end up with a new instance on which we can configure a few parameters.

The lang property sets the language of the text to be recognised. If this property is not set, the browser will use the HTML lang attribute value, or the user agent’s language setting when that attribute is not set.

When setting continuous to true, the browser will listen continuously for spoken words. By default this is turned off and the API will stop listening when it’s done recognising a single piece of text.

By setting the interimResults to true, the browser will return results while it’s processing. Once the API is fairly certain it has the final result, it will send it. The intermediate results will almost always contain mistakes, but it could be useful to get some early feedback.

Of course, we want to be notified when a result is available. By setting an event handler as the onresult property, we can get the results from the API and do something with them. The same goes for errors: we can catch them with the onerror event handler.

All that’s left is to start listening!
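If you do switch on continuous or interimResults, reading only event.results[0][0] is no longer enough, because every result event can carry several results, some final and some still being refined. The sketch below shows one way to split them, using the resultIndex and isFinal properties from the Web Speech API. collectTranscripts is a hypothetical helper name of my own.

```javascript
// Hypothetical helper: splits the results of one `result` event into
// finished text and text that is still being refined.
function collectTranscripts(event) {
  let finalText = '';
  let interimText = '';
  // `resultIndex` is the lowest index of the results that changed.
  for (let i = event.resultIndex; i < event.results.length; i++) {
    const result = event.results[i];
    // First alternative; with the default maxAlternatives of 1 it is the only one.
    const alternative = result[0];
    if (result.isFinal) finalText += alternative.transcript;
    else interimText += alternative.transcript;
  }
  return { finalText, interimText };
}

// Usage in the browser, with `recognition.interimResults = true`:
// recognition.onresult = event => console.log(collectTranscripts(event));
```

Each alternative also has a confidence property between 0 and 1, which is the certainty value mentioned earlier.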

See the Pen Web speech recognition API by Sam (@Sambego) on CodePen.

Media Recorder API

Video chat and voice messages are getting more common lately. Wouldn’t it be nice if there was an easy-to-use API to record a media stream? It turns out the Media Recorder API makes a job like this a breeze.

We can combine MediaDevices.getUserMedia() to access the user’s webcam or microphone and the Media Recorder API to record data from these devices.

Accessing the user’s webcam and microphone works in the latest versions of all major browsers. Recording media is currently only possible in Chrome and Firefox.

To record a video, we’ll need a little bit more code than the two examples above, so let’s get started.

navigator.mediaDevices.getUserMedia({

video: true,

audio: true,

}).then(stream => {

...

});

By calling getUserMedia we ask permission to access both the user’s webcam and microphone. If you would like to use only one of them, just leave out video or audio from the config object. The call returns a Promise which resolves with a media stream. We will pass this stream to our media recorder later.

The getUserMedia method used to be available right from the navigator object. You can still find it there, but it’s deprecated in favour of the method in the navigator.mediaDevices object.
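The Promise can also reject, for example when the user declines the permission prompt or when there is no camera at all. A minimal sketch of handling that follows. describeMediaError and its messages are hypothetical, but the error names are the ones browsers use for getUserMedia rejections.

```javascript
// Hypothetical helper: turns a getUserMedia rejection into a readable message.
function describeMediaError(error) {
  switch (error.name) {
    case 'NotAllowedError':
      return 'Permission to use the camera or microphone was denied.';
    case 'NotFoundError':
      return 'No camera or microphone was found.';
    case 'NotReadableError':
      return 'The camera or microphone could not be read, maybe it is in use.';
    default:
      return `Could not access media devices: ${error.name}`;
  }
}

// Usage in the browser:
// navigator.mediaDevices.getUserMedia({ video: true, audio: true })
//   .then(stream => { /* start recording */ })
//   .catch(error => console.error(describeMediaError(error)));
```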

const recorder = new MediaRecorder(stream);

recorder.ondataavailable = saveRecordingChunk;

recorder.onstop = saveRecording;

Once we have access to the user’s microphone and video, we want to create a new MediaRecorder instance, and pass along the stream of incoming video and audio. The MediaRecorder instance has a few event handlers we can configure. We’re going to use the ondataavailable and onstop event handlers in this example.

Every time some new data becomes available, the ondataavailable event handler will be called. In this event handler we will save the newly available data for later use.

const chunks = [];

const saveRecordingChunk = event => {

chunks.push(event.data);

};

When we stop recording, the onstop event handler will be called. We will use the chunks we saved every time some data became available to create a preview of our recording. The first thing we need to do is create a Blob from the saved chunks. Once we have that Blob we can create an object URL that points to it. By passing this URL as the source of an HTML5 <video> element, we can view our recording. Of course, if you only recorded audio, you can pass it to an HTML5 <audio> element.

const saveRecording = event => {

const recordingBlob = new Blob(chunks, {

type: 'video/webm',

});

const recordingURL = URL.createObjectURL(recordingBlob);

document.querySelector('video').src = recordingURL;

};
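The example hard-codes video/webm, but not every browser records in that format. MediaRecorder.isTypeSupported() lets you ask first. Below is a small sketch of picking a format. pickMimeType is a hypothetical helper, and the candidate list in the usage comment is only an assumption about formats you might want to try.

```javascript
// Hypothetical helper: returns the first container/codec string the browser
// says it can record, or '' to let the browser pick its own default.
function pickMimeType(candidates, isSupported) {
  return candidates.find(type => isSupported(type)) || '';
}

// Usage in the browser:
// const mimeType = pickMimeType(
//   ['video/webm;codecs=vp9', 'video/webm', 'video/mp4'],
//   type => MediaRecorder.isTypeSupported(type),
// );
// const recorder = new MediaRecorder(stream, mimeType ? { mimeType } : {});
// ...and later, in the onstop handler:
// const recordingBlob = new Blob(chunks, { type: recorder.mimeType });
```

Keep in mind that the Blob only lives in the browser’s memory. Nothing is stored on a server unless you upload it yourself.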

Now all that’s left to do is to actually start recording.

recorder.start();
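The onstop handler only runs once the recording is stopped, so at some point you need to call recorder.stop(). It’s also a good idea to stop the stream’s tracks, otherwise the camera indicator stays on. Here is a sketch that records for a fixed duration. recordFor is a hypothetical helper, and its schedule parameter only exists so the timing can be swapped out.

```javascript
// Hypothetical helper: starts the recorder and stops it after `durationMs`.
// `schedule` defaults to setTimeout and is a parameter only to ease testing.
function recordFor(recorder, stream, durationMs, schedule = setTimeout) {
  recorder.start();
  schedule(() => {
    // stop() triggers a last dataavailable event, followed by the stop event.
    if (recorder.state !== 'inactive') recorder.stop();
    // Stopping the tracks releases the camera and microphone.
    stream.getTracks().forEach(track => track.stop());
  }, durationMs);
}

// Usage in the browser, recording five seconds:
// recordFor(recorder, stream, 5000);
```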

See the Pen Media recorder API by Sam (@Sambego) on CodePen.

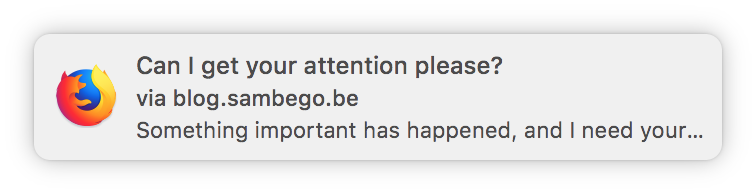

Notification API

When you use a web app and that app wants to send you a notification about something, it usually sends an email with a call to action. If you’ve got the mobile app installed, you might also get a push notification. These are all convenient ways of alerting a user that something has happened, and encourage them to take some action. But what if I told you the web can also send notifications? I’m not talking about the Facebook notification flyout, but actual native toast messages.

The browser support for creating notifications is pretty good. All major desktop browsers are able to do so, and most mobile browsers as well. Only Safari on iOS does not support this yet.

To send these notifications, we first need to get permission from the user. Asking for permissions will usually happen automatically when you use a certain browser API, but not when trying to send a notification. We need to explicitly ask for permission. Once the user has given the permission, the browser will remember it and the permission will stay valid until the user revokes it.

Notification.requestPermission(permission => {

if (permission === 'granted') {

// We can send a notification here

}

});
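The permission can be in one of three states: granted, denied, or default when the user hasn’t decided yet. You can read the current state from Notification.permission without prompting anyone. The sketch below decides what to do for each state. nextStep and its return values are hypothetical names of my own.

```javascript
// Hypothetical helper: decides what to do for a given permission state.
function nextStep(permission) {
  if (permission === 'granted') return 'notify';
  if (permission === 'denied') return 'skip'; // the user said no, don't nag
  return 'ask'; // 'default': the user has not decided yet
}

// Usage in the browser, assuming a button with the hypothetical id "notify-me"
// and a browser that returns a Promise from requestPermission():
// document.querySelector('#notify-me').addEventListener('click', () => {
//   if (nextStep(Notification.permission) === 'ask') {
//     Notification.requestPermission().then(result => {
//       if (nextStep(result) === 'notify') { /* create the notification */ }
//     });
//   }
// });
```

Asking from a click handler rather than on page load tends to go down better with users, and some browsers only show the prompt after a user gesture.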

Now that the user has granted us permission to send notifications, we can create one.

const notification = new Notification('Can I get your attention please?', {

body: `Something important has happened, and I need your attention.`,

icon: 'url/to/a/cat/icon.png',

});

When creating a new Notification instance, you can pass along a title, and some other optional parameters like a body text or icon to easily identify the origin of the notification. The browser will add the URL from which the notification has been sent.

As mentioned before, we don’t only want to focus the user’s attention on something that has happened. We want the user to respond to that event as well. Now that we created a new Notification instance, it’s easy to add a click event handler to it. This will allow the user to click on the notification and execute an action.

notification.onclick = event => {

// Do something cool!

};
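What "something cool" means is up to you. A common choice is to bring your page back to the front and dismiss the notification. A minimal sketch of that, where handleNotificationClick is a hypothetical helper that receives the window as a parameter:

```javascript
// Hypothetical helper: brings the page to the front and dismisses the notification.
function handleNotificationClick(notification, win) {
  win.focus();
  notification.close();
}

// Usage in the browser:
// notification.onclick = () => handleNotificationClick(notification, window);
```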

It’s important to know that there is a big difference between the push notifications you receive on your phone and the notifications we are creating here. These notifications can only be sent when the user is visiting your website or web app.

It’s possible to send push notifications from the web, using Service Workers, but I won’t go into this here, as it would require an article of its own.

See the Pen Notification API by Sam (@Sambego) on CodePen.

Geolocation API

The last API I want to talk about is the Geolocation API. As the name suggests, it allows you to identify your user’s location. Knowing your user’s location can be useful for a more personal service. For example, you could show news or events close to the location.

Asking for your user’s permission to identify the location can be a sensitive thing to do, especially with users who value their privacy. Always consider whether it adds value to know the location or not.

If you are wondering about the browser support for this API, I’ve got good news. It works in all major browsers.

Now that you know it’s possible to ask for the user’s location, and you’ve decided it’s going to make your website or web app better, let’s see how we can do so.

navigator.geolocation.getCurrentPosition(position => {

console.log('Your current position:', position.coords);

});

We call geolocation.getCurrentPosition, which passes a Position object to our callback once the location is known. This object’s coords property contains the coordinates of the user’s location; its two most important properties are latitude and longitude.
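The method also accepts a second callback for errors and a third argument with options. The error object carries a numeric code: 1 when permission was denied, 2 when the position is unavailable and 3 on a timeout. The sketch below turns those codes into messages. describeGeolocationError is a hypothetical helper, and the option values in the usage comment are arbitrary examples.

```javascript
// Hypothetical helper: maps the numeric error code to a readable message.
function describeGeolocationError(error) {
  switch (error.code) {
    case 1:
      return 'Permission to read the location was denied.';
    case 2:
      return 'The location could not be determined.';
    case 3:
      return 'Looking up the location took too long.';
    default:
      return 'An unknown geolocation error occurred.';
  }
}

// Usage in the browser:
// navigator.geolocation.getCurrentPosition(
//   position => console.log(position.coords.latitude, position.coords.longitude),
//   error => console.warn(describeGeolocationError(error)),
//   { enableHighAccuracy: false, timeout: 10000, maximumAge: 60000 },
// );
```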

If you’re like me (and you probably are), latitude and longitude don’t really mean much to you. Fortunately, there are a lot of services which let you convert these coordinates to a more readable result. Let’s see how we can use the Google Maps API to get a city name from these coordinates.

// `maps` is assumed to be the loaded Google Maps namespace, e.g. google.maps.

// `position` is the object passed to the getCurrentPosition callback above.

const latLng =

new maps.LatLng(position.coords.latitude, position.coords.longitude);

const geocoder = new maps.Geocoder();

geocoder.geocode({location: latLng}, (results, status) => {

if (status === maps.GeocoderStatus.OK) {

console.log(`Your current position is:

${results.find(result =>

result.types.includes('locality'))['formatted_address']}`);

}

});

You can get more detailed information on how to use the Google Maps API on their documentation website.
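One detail to watch out for in the snippet above: find returns undefined when none of the results is a locality, for example somewhere in the middle of the ocean, and reading formatted_address from undefined throws a TypeError. A slightly more defensive sketch could look like this, where findLocality is a hypothetical helper name:

```javascript
// Hypothetical helper: returns the formatted address of the first result
// tagged as a locality, or null when the geocoder found none.
function findLocality(results) {
  const match = (results || []).find(result =>
    result.types.includes('locality'));
  return match ? match.formatted_address : null;
}

// Usage inside the geocode callback:
// const city = findLocality(results);
// console.log(city ? `Your current position is: ${city}` : 'No city found.');
```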

See the Pen Geolocation API by Sam (@Sambego) on CodePen.

Conclusion

By showing you five things that are possible in a browser, I hope you start to see the potential of the web. Where we used to download and install heavy, platform-specific software, we are now able to visit a website. With Progressive Web Apps being the next big thing, it’s also good to know that the web is more than text and forms.

Not all of these APIs might be available in all browsers, but we need to be patient. The web is evolving at an amazing speed, and browser vendors constantly improve their products. What’s not yet possible today might be possible in a few months.

If you’d like to know more about what’s possible on the web, I’d suggest you dive into the documentation on MDN and start playing around.

All demos for this article can be found on CodePen.

Now go and impress your users with a talking browser that shows a notification of where they are!

Hi! I’m having trouble with the media recording API. I’m pretty simple, and not a coder – I use things that I find and muddle my way into making them work… But with this I’m stuck! I’ve tried implementing in my wordpress site as a page, but only got a button and the CSS interfered with the site (I pulled the CSS out and still only got the button, just far less pretty).

I made a new html file and attempted it separately as a standalone page. All the javascript, html, and css is in as you’ve posted in the example, but still only a button….

I’m a reseller for you, so you should be able to answer these questions:

Am I missing anything at the backend? Is there more needed than just the code posted? How does my site store the video after the API does its job – in what directory, and with what filenames?

Thank you!

Hey, it looks like your geolocation code isn’t working because it’s embedded in an iframe. Check here for Google’s explanation on the matter. https://sites.google.com/a/chromium.org/dev/Home/chromium-security/deprecating-permissions-in-cross-origin-iframes

Hi Tristan (for some reason I can’t reply in-line on Firefox),

I’m not sure that’s the issue. You will probably have looked at the site and found my test page, but if you go to mediarecordtest.html on the domain then you’ll see it’s just your code put straight into a standard page with no iFrames, and still not working……

I’m sure I’m just being dim on this! Your demo works fine so I know it’s not my browser…

Cheers 🙂

Pip

Would it be easier if I just opened a ticket? 😉